We recently ran a webinar on AI adoption called “The AI Reality Check: What It Takes to Get Results in the Real World” (watch it here), and we were encouraged by the positive feedback from our very much human audience (although these days you can never be sure!).

But we could not help but wonder: what did the subject of our webinar think of it?

So we fired up our trusty AI sidekick, provided it with the webinar footage, and gave it the following prompt:

Is the provided webinar a fair representation of AI, and what criticisms can you find?

The result? Surprisingly helpful. Slightly spicy. Very “robotically direct.”

Here is the feedback we got:

Where the representation is strong (and accurate)

1) AI value ≠ AI adoption

One of the clearest messages in the session is that simply “rolling out AI” doesn’t guarantee results. The webinar repeatedly separates tool adoption (licenses, pilots, new features turned on) from real business value (time saved, cost reduced, risk lowered, service improved).

Examples like shaving minutes off repetitive processes, automating admin-heavy tasks, and focusing on measurable outcomes came through strongly.

Why this is fair:

Across most organisations right now, the biggest predictor of AI success isn’t the sophistication of the model—it’s whether the business has clear processes, usable data, and the change management to make new ways of working stick.

2) Shadow AI is real—and growing

The discussion around employees using tools like ChatGPT outside approved environments (“shadow AI”) resonated as both realistic and timely. The webinar’s examples—such as sensitive information being pasted into public tools, or work being done outside visibility—reflect what many leaders are only just starting to uncover.

Why this is fair:

Most businesses already have AI usage happening today—whether sanctioned or not. Positioning shadow AI as a governance and enablement issue (rather than a “people doing the wrong thing” issue) is pragmatic and aligns with what organisations are seeing in the real world.

3) Data quality and permissions matter more than models

A repeated theme is that AI performance is often constrained less by the AI itself and more by what it’s being fed—things like:

- inconsistent data hygiene

- unclear classification and ownership

- overly broad or irrelevant context

- SharePoint and file sprawl that has built up over years

One particularly strong point: AI doesn’t create new access—it makes existing access far more visible and usable, which means messy permissions can become obvious fast.

Why this is fair:

This matches what’s happening in many Copilot-style deployments: AI accelerates outcomes, but it also accelerates exposure of underlying data problems.
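For readers who want to see what "messy permissions becoming obvious" can look like in practice, here is a minimal Python sketch of a pre-rollout check. It is only an illustration under stated assumptions: the inventory records, the `label` and `shared_with` fields, and the group names in `BROAD_GROUPS` are all hypothetical, and a real check would read from whatever sharing report your file platform can export.

```python
# Hypothetical inventory records (made up for illustration). In practice these
# would come from an export of your file platform's sharing report.
inventory = [
    {"path": "/HR/salaries.xlsx", "label": "confidential", "shared_with": ["Everyone"]},
    {"path": "/Marketing/brochure.pdf", "label": "public", "shared_with": ["Everyone"]},
    {"path": "/Finance/forecast.xlsx", "label": "confidential", "shared_with": ["Finance Team"]},
    {"path": "/Projects/old-notes.docx", "label": None, "shared_with": ["All Staff"]},
]

# Assumed names for organisation-wide groups; adjust to your environment.
BROAD_GROUPS = {"Everyone", "All Staff"}


def flag_risky_files(records):
    """Return (path, reason) pairs for files an AI assistant could surface too widely."""
    flagged = []
    for record in records:
        broadly_shared = bool(BROAD_GROUPS & set(record["shared_with"]))
        if not broadly_shared:
            continue  # narrow sharing: existing access is already limited
        if record["label"] is None:
            flagged.append((record["path"], "broadly shared and unclassified"))
        elif record["label"] != "public":
            flagged.append((record["path"], "broadly shared but labelled " + record["label"]))
    return flagged


flagged = flag_risky_files(inventory)
for path, reason in flagged:
    print(f"{path}: {reason}")
```

The check grants nothing and changes nothing. It only lists files that are already widely accessible, which is the same exposure an AI assistant would make easier to find.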

4) Narrow, scoped use cases outperform “big AI projects”

The webinar consistently favours smaller, bounded wins over large, bespoke “AI transformation” programs. Examples like meeting notes, triage, internal agents, and workflow automation came up repeatedly—along with the idea that tight scope is one of the best forms of risk management.

Why this is fair:

Right now, platforms and models are moving quickly. Smaller use cases deliver value sooner, reduce uncertainty, and avoid building something that becomes obsolete before it’s fully adopted.

Where the representation could be expanded (and made even more useful)

5) Risk is emphasised more than upside (which is valuable—but not the whole story)

The webinar frames AI heavily through a lens of:

- cyber and compliance risk

- governance gaps

- model failure modes (hallucinations, bias, misuse)

- exposure through uncontrolled usage

All of that is valid—and for many organisations, it’s the right place to start.

What could be added for more balance:

Alongside risk, more attention could be given to opportunity-focused angles, such as:

- revenue growth use cases

- customer-facing innovation

- competitive differentiation

- safe experimentation in low-risk domains

That balance helps leaders come away with the message “AI is powerful when guided and risky when unmanaged,” rather than simply “AI is risky.”

6) Agent orchestration is impressive—but not always “easy” in practice

The webinar’s explanation of multi-agent orchestration (orchestrator → workers → validators → security checks) is technically sound and genuinely exciting. It’s a glimpse into where AI is heading.

Where a practical caveat could be added:

It could help to emphasise that orchestrated systems often bring:

- operational complexity

- monitoring and maintenance overhead

- debugging challenges

- higher skill requirements for safe deployment

AI lowers barriers, but robust orchestration still benefits from experienced oversight.
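To make the orchestrator → workers → validators → security checks pattern concrete, here is a minimal Python sketch. It is not the webinar's implementation: `summarise_worker` is a stand-in for a real model call, and the function names and the list of sensitive markers are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Result:
    task: str
    output: str
    issues: list[str] = field(default_factory=list)


def summarise_worker(task: str) -> str:
    # Stand-in for a model call; a real worker would call an AI service here.
    return f"Summary of: {task}"


def validator(result: Result) -> None:
    # Validation step: reject empty output.
    if not result.output.strip():
        result.issues.append("empty output")


def security_check(result: Result) -> None:
    # Security step: look for markers that suggest leaked secrets (assumed list).
    for marker in ("password", "api_key"):
        if marker in result.output.lower():
            result.issues.append(f"possible secret: {marker}")


def orchestrate(tasks, worker, checks) -> list[Result]:
    """Send each task to the worker, then run every check on the worker's output."""
    results = []
    for task in tasks:
        result = Result(task=task, output=worker(task))
        for check in checks:
            check(result)
        results.append(result)
    return results


results = orchestrate(
    ["Meeting notes for Monday", "Reset password: hunter2"],
    summarise_worker,
    [validator, security_check],
)
for r in results:
    status = "BLOCKED" if r.issues else "OK"
    print(status, r.task, r.issues)
```

Even in this toy version, every extra worker or check is one more thing to monitor and debug, which is where the operational overhead listed above comes from.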

7) “Anyone can do this” is directionally true—with the right guardrails

The webinar shares an empowering message: non-developers can build useful tools, AI can teach you, and the learning curve doesn’t have to be steep.

Suggested nuance to add:

This works best when:

- scope is tight

- data is clean and accessible

- guardrails are defined

- someone experienced is supervising

Without these conditions, organisations can still create fragile, insecure, or hard-to-maintain systems—just faster than before.

8) The model landscape is accurate—but practical differences could be highlighted

The webinar correctly notes that many AI products sit on a small number of foundation models (OpenAI, Anthropic, Google, and others). That simplification is helpful at an executive level.

Suggested addition of a “leader’s lens”:

A quick layer on what materially changes between model choices could add value, including:

- latency and cost differences

- quality evaluation and benchmarking

- governance tooling maturity

- sovereignty considerations beyond “US vs AU hosting”

- open-weight vs closed-weight trade-offs

Even a high-level overview can help decision-makers ask smarter questions.

Two topics that deserve more airtime in most AI conversations

9) Organisational change is often harder than the technology

Training and internal champions are mentioned, but the AI suggests this could be explored further—because it’s frequently where AI programs stall.

Areas worth spotlighting:

- adoption anxiety and fear of “replacement”

- concerns about deskilling

- role redefinition and workflow redesign

- building confidence through enablement, not pressure

10) Measurement after deployment (not just upfront ROI)

The webinar reinforces the importance of defining ROI early, which is excellent. The AI suggests building on that by discussing what comes next, such as:

- ongoing measurement and feedback loops

- drift detection

- silent failure modes (where AI is “working” but producing poorer outcomes)

- knowing when to adjust, pause, or turn AI off

This is quickly becoming a hallmark of mature AI programs.
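As a rough illustration of ongoing measurement, the Python sketch below compares recent quality scores against a baseline and suggests whether to keep, review, or pause an AI workflow. Everything specific here is an assumption: the scores are invented, the function name `check_drift` is hypothetical, and the 5% and 15% thresholds are placeholders that each organisation would need to set for itself.

```python
from statistics import mean


def check_drift(baseline_scores, recent_scores, warn_drop=0.05, pause_drop=0.15):
    """Compare average quality now against the baseline and suggest an action.

    Scores are assumed to be between 0 and 1 (for example, the share of AI
    outputs that a human reviewer accepted without changes).
    """
    drop = mean(baseline_scores) - mean(recent_scores)
    if drop >= pause_drop:
        return "pause"   # quality has fallen far enough to switch the workflow off
    if drop >= warn_drop:
        return "review"  # noticeable decline: investigate before it gets worse
    return "keep"


# Invented example scores for three review periods.
baseline = [0.90, 0.92, 0.88]
print(check_drift(baseline, [0.89, 0.91, 0.90]))
print(check_drift(baseline, [0.80, 0.82, 0.84]))
print(check_drift(baseline, [0.70, 0.72, 0.74]))
```

A check like this can catch the silent failure case, where the tool keeps running without errors but its output is gradually getting worse.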

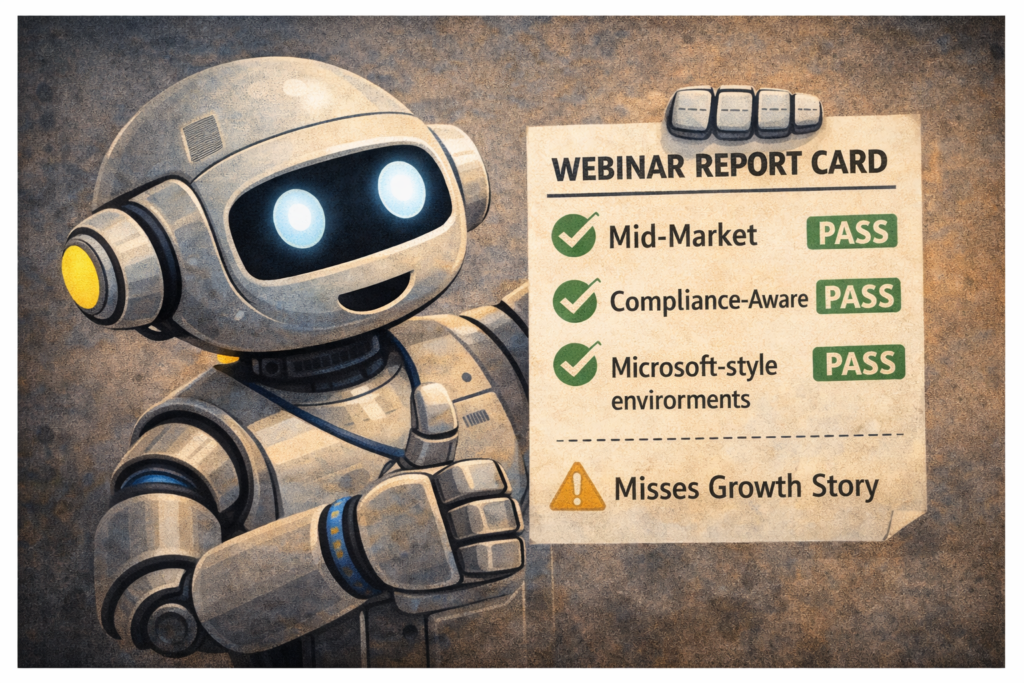

The bottom line

✅ Is the webinar a fair representation of AI today?

Yes—especially for mid-market, compliance-aware organisations operating in Microsoft-style environments.

⚠️ Is it the complete story?

Not entirely. It prioritises:

- risk over opportunity

- operational wins over strategic transformation

- practical MSP-friendly patterns over frontier experimentation

How to best interpret it:

It’s an excellent corrective to AI hype—and it becomes even stronger when paired with a narrative around:

- growth and differentiation

- safe experimentation

- customer-facing innovation

- longer-term competitive advantage

Want to watch the webinar on demand?

If you missed “The AI Reality Check: What It Takes to Get Results in the Real World,” you can still access it on demand. We encourage you to check it out and perhaps share some human feedback and opinions on this incredibly timely topic!

If you’re trying to move from AI curiosity to real outcomes, without chaos, unmanaged risk, or wasted spend, BITS can help you identify the right use cases, put guardrails in place, and build a roadmap that actually delivers.